Discussion

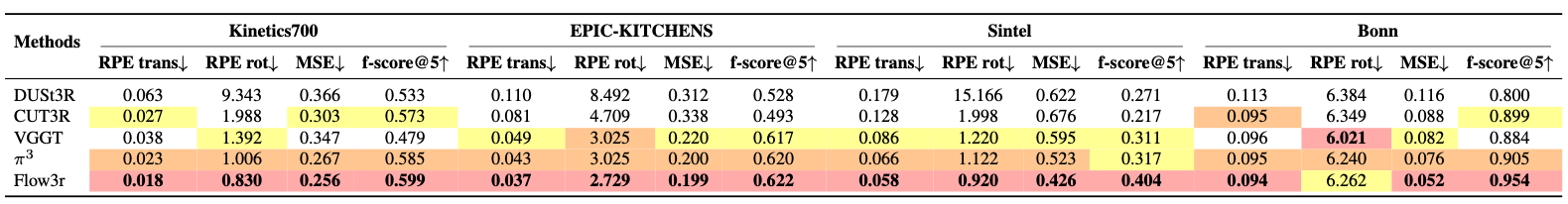

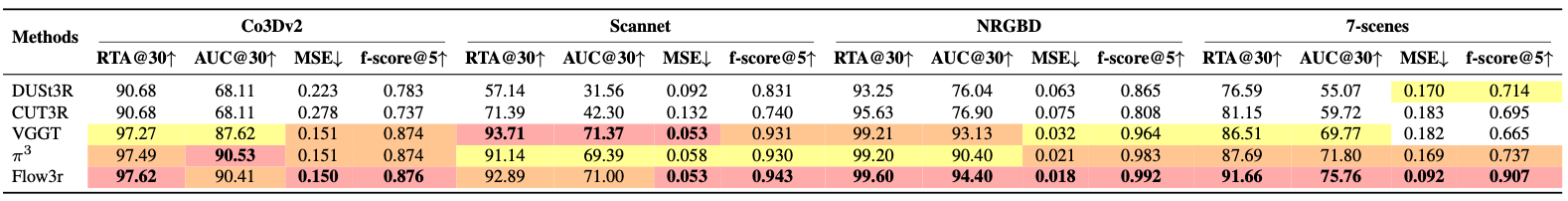

In this work, we present flow3r and demonstrate that it effectively leverages in-the-wild unlabeled data by introducing factored flow prediction, advancing visual geometry learning beyond existing fully supervised methods. While our approach opens up new possibilities, several challenges remain.

First, flow3r relies on off-the-shelf models to provide pseudo-ground-truth flow supervision. In domains where these 2D predictions fail, the supervision signal degrades, which limits the performance upper bound of flow3r. Second, although our factored flow formulation handles dynamic scenes and lets flow supervision improve the learning of both camera motion and scene geometry, flow3r may struggle in complex scenes with multiple independently moving components.

Finally, our current experiments operate at a moderate scale (~800K video sequences for flow supervision), and scaling to truly large-scale settings (~10–100M videos) remains an exciting but unexplored direction. Although this is beyond the scope of the present work due to computational constraints, we envision flow3r's formulation serving as a building block for future large-scale learning methods.